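Contents

- 1. Installing common libraries

- 2. Basic crawler development

- 2.1. Fetching page content with requests

- 2.2. Parsing HTML with BeautifulSoup

- 2.3. Handling login and sessions

- 3. Advanced crawler development

- 3.1. Handling dynamically loaded content (Selenium)

- 3.2. Using the Scrapy framework

- 3.3. Distributed crawling (Scrapy-Redis)

- 4. Crawler optimization and countering anti-scraping measures

- 4.1. Common anti-scraping mechanisms and countermeasures

- 4.2. Proxy IP usage example

- 4.3. Random delays and request headers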

BeautifulSoup official documentation

https://beautifulsoup.readthedocs.io/zh-cn/v4.4.0/

https://cloud.tencent.com/developer/article/1193258

https://blog.csdn.net/zcs2312852665/article/details/144804553

References:

https://blog.51cto.com/haiyongblog/13806452

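1. Installing common libraries

pip install requests beautifulsoup4 scrapy selenium pandas

The later examples also import webdriver_manager, fake_useragent, and scrapy_redis, which need a separate install:

pip install webdriver-manager fake-useragent scrapy-redis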

2. Basic crawler development

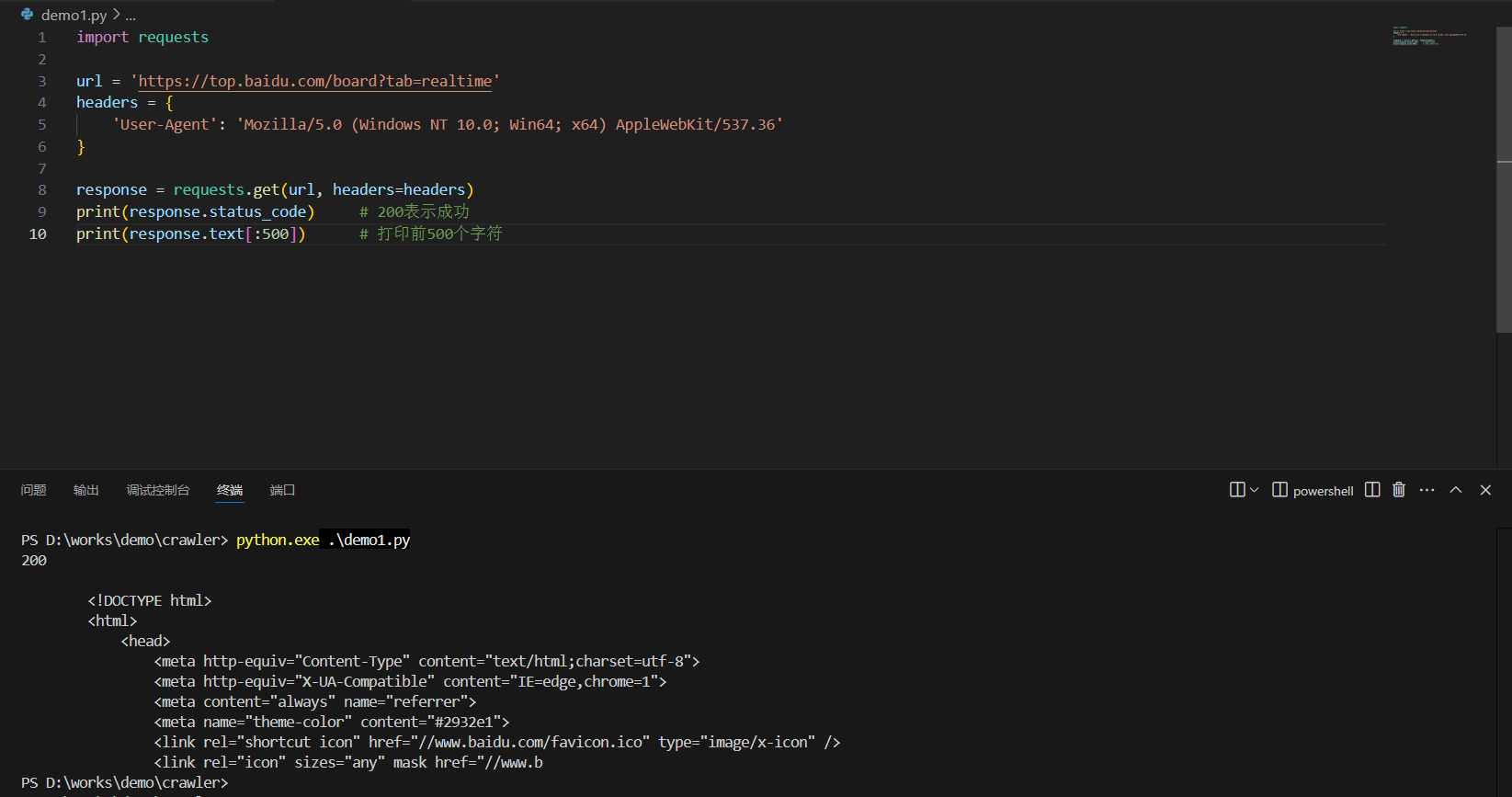

2.1. Fetching page content with requests

import requests

url = 'https://top.baidu.com/board?tab=realtime'
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36'
}

# A timeout keeps the script from hanging on an unresponsive server
response = requests.get(url, headers=headers, timeout=10)
print(response.status_code)  # 200 means success
print(response.text[:500])  # print the first 500 characters

2.2. Parsing HTML with BeautifulSoup

from bs4 import BeautifulSoup

html_doc = """
<html><head><title>Test page</title></head>
<body>
<p class="title"><b>Example site</b></p>
<p class="story">This is an example page
<a href="http://example.com/1" class="link" id="link1">Link 1</a>
<a href="http://example.com/2" class="link" id="link2">Link 2</a>
</p>
</body></html>
"""

soup = BeautifulSoup(html_doc, 'html.parser')

# Get the page title
print(soup.title.string)

# Get every link
for link in soup.find_all('a'):
    print(link.get('href'), link.string)

# Find an element by CSS class
print(soup.find('p', class_='title').text)
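The `href` values collected above are absolute, but on real pages they are often relative. A minimal sketch of resolving them with the standard library's `urllib.parse.urljoin`, assuming the URL of the fetched page is known; the helper name `absolutize_links` and the sample URLs are illustrative and not part of the original article.

```python
from urllib.parse import urldefrag, urljoin


def absolutize_links(base_url, hrefs):
    """Resolve hrefs against base_url, dropping fragments and duplicates."""
    seen = set()
    result = []
    for href in hrefs:
        if not href:
            continue  # skip <a> tags that have no href attribute
        absolute, _fragment = urldefrag(urljoin(base_url, href))
        if absolute not in seen:
            seen.add(absolute)
            result.append(absolute)
    return result


links = absolutize_links(
    'http://example.com/news/index.html',
    ['/about', 'item1.html', 'http://example.com/about#team', None],
)
print(links)
```

The list passed as `hrefs` could come straight from `[a.get('href') for a in soup.find_all('a')]`.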

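2.3. Handling login and sessions

import requests

login_url = 'https://example.com/login'
target_url = 'https://example.com/dashboard'

# A Session keeps cookies across requests, so the login persists
session = requests.Session()

# Login request
login_data = {
    'username': 'your_username',
    'password': 'your_password'
}
response = session.post(login_url, data=login_data)

# Note: some sites return 200 even for a failed login,
# so checking the page content is more reliable than the status code alone
if response.status_code == 200:
    # Visit a page that requires login
    dashboard = session.get(target_url)
    print(dashboard.text)
else:
    print('Login failed')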

3. Advanced crawler development

3.1. Handling dynamically loaded content (Selenium)

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.chrome.service import Service
from webdriver_manager.chrome import ChromeDriverManager

# Configure a headless browser
options = webdriver.ChromeOptions()
options.add_argument('--headless')  # run without a visible window
options.add_argument('--disable-gpu')

# Download a matching chromedriver automatically
driver = webdriver.Chrome(service=Service(ChromeDriverManager().install()), options=options)

try:
    # Implicit wait: element lookups retry for up to 10 seconds
    driver.implicitly_wait(10)

    url = 'https://dynamic-website.com'
    driver.get(url)

    # Read the dynamically loaded content
    dynamic_content = driver.find_element(By.CLASS_NAME, 'dynamic-content')
    print(dynamic_content.text)
finally:
    # Always close the browser, even if the lookup fails
    driver.quit()

3.2. Using the Scrapy framework

# Create a Scrapy project
# scrapy startproject example_project
# cd example_project
# scrapy genspider example example.com

# Example spider code
import scrapy


class ExampleSpider(scrapy.Spider):
    name = 'example'
    allowed_domains = ['example.com']
    start_urls = ['http://example.com/']

    def parse(self, response):
        # Extract data
        title = response.css('title::text').get()
        links = response.css('a::attr(href)').getall()
        yield {'title': title, 'links': links}

# Run the spider
# scrapy crawl example -o output.json
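Scrapy's built-in throttling covers part of the delay advice given in section 4. A sketch of `settings.py` entries for the project generated above; the setting names are Scrapy's own, while the specific values are illustrative assumptions that should be tuned per site.

```python
# settings.py (fragment): the values below are illustrative assumptions
ROBOTSTXT_OBEY = True                # respect the site's robots.txt rules
DOWNLOAD_DELAY = 1.0                 # base delay in seconds between requests to one site
RANDOMIZE_DOWNLOAD_DELAY = True      # wait between 0.5x and 1.5x of DOWNLOAD_DELAY
CONCURRENT_REQUESTS_PER_DOMAIN = 4   # cap parallel requests per domain
AUTOTHROTTLE_ENABLED = True          # adapt the delay to observed server latency
AUTOTHROTTLE_START_DELAY = 1.0       # initial AutoThrottle delay in seconds
AUTOTHROTTLE_MAX_DELAY = 10.0        # upper bound for the adaptive delay
```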

3.3. Distributed crawling (Scrapy-Redis)

# settings.py configuration
SCHEDULER = "scrapy_redis.scheduler.Scheduler"
DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"
REDIS_URL = 'redis://localhost:6379'

# Spider code
from scrapy_redis.spiders import RedisSpider


class MyDistributedSpider(RedisSpider):
    name = 'distributed_spider'
    redis_key = 'spider:start_urls'

    def parse(self, response):
        # Parsing logic goes here
        pass
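A `RedisSpider` defines no `start_urls`; it waits until URLs appear under `redis_key`. A minimal sketch of seeding that key, assuming scrapy-redis's default behavior of reading start URLs from a Redis list. `seed_start_urls` and `FakeRedis` are hypothetical names: the stand-in class mimics only `lpush` so that the snippet runs without a Redis server.

```python
class FakeRedis:
    """In-memory stand-in that mimics only redis-py's lpush (demo purposes)."""

    def __init__(self):
        self.lists = {}

    def lpush(self, key, *values):
        bucket = self.lists.setdefault(key, [])
        for value in values:
            bucket.insert(0, value)  # LPUSH prepends to the head of the list
        return len(bucket)


def seed_start_urls(client, key, urls):
    """Push each URL onto the list the spider polls; return the final list length."""
    length = 0
    for url in urls:
        length = client.lpush(key, url)
    return length


client = FakeRedis()
count = seed_start_urls(
    client,
    'spider:start_urls',
    ['http://example.com/page1', 'http://example.com/page2'],
)
print(count, client.lists['spider:start_urls'])
```

With a real server, `redis.Redis.from_url('redis://localhost:6379')` from the redis-py package would take the place of `FakeRedis()`.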

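4. Crawler optimization and countering anti-scraping measures

4.1. Common anti-scraping mechanisms and countermeasures

User-Agent detection: rotate the User-Agent at random

IP restrictions: use a pool of proxy IPs

CAPTCHAs: OCR recognition or a CAPTCHA-solving service

Behavior analysis: mimic the intervals of human activity

JavaScript rendering: use Selenium or Pyppeteer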
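Two of the countermeasures above, rotating the User-Agent and spacing requests out, can be sketched with the standard library alone. The User-Agent strings, the `pick_headers` and `backoff_delays` helpers, and the timing constants are illustrative assumptions rather than values from the original article.

```python
import random

# A small hand-written pool; a real crawler would maintain a larger, current one
USER_AGENTS = [
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36',
    'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15',
    'Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0',
]


def pick_headers(rng=random):
    """Return request headers with a randomly chosen User-Agent."""
    return {'User-Agent': rng.choice(USER_AGENTS)}


def backoff_delays(retries, base=1.0, cap=30.0, rng=random):
    """Yield one delay per retry: exponential growth, capped, with full jitter."""
    for attempt in range(retries):
        upper = min(cap, base * (2 ** attempt))
        yield rng.uniform(0, upper)


headers = pick_headers()
delays = list(backoff_delays(5))
print(headers['User-Agent'])
print([round(d, 2) for d in delays])
```

In a retry loop, the caller would `time.sleep(delay)` before each new attempt, for example after the server answers with status 429 or 503.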

4.2. Proxy IP usage example

import requests

# Replace proxy_ip and port with the address of a real proxy
proxies = {
    'http': 'http://proxy_ip:port',
    'https': 'https://proxy_ip:port'
}

try:
    response = requests.get('https://example.com', proxies=proxies, timeout=5)
    print(response.text)
except Exception as e:
    print(f'Request failed: {e}')
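A single proxy is easy to block, so the proxy pool idea from section 4.1 usually means cycling through several. A minimal sketch, assuming plain HTTP proxies at the placeholder addresses below (taken from the documentation-reserved 203.0.113.0/24 range, not real proxies); `build_proxies` and `proxy_cycle` are hypothetical helper names.

```python
from itertools import cycle


def build_proxies(host, port):
    """Build the scheme-to-proxy mapping that requests accepts via proxies=."""
    proxy_url = f'http://{host}:{port}'
    # An HTTP proxy can tunnel HTTPS traffic too, so both keys share one URL
    return {'http': proxy_url, 'https': proxy_url}


def proxy_cycle(endpoints):
    """Return an endless iterator over proxies dicts, one per (host, port) pair."""
    return cycle([build_proxies(host, port) for host, port in endpoints])


pool = proxy_cycle([('203.0.113.10', 8080), ('203.0.113.11', 3128)])
first, second, third = next(pool), next(pool), next(pool)
print(first)
print(third == first)  # the pool wraps around after the last proxy
```

Each request would then pass `proxies=next(pool)` to `requests.get`, moving on to the next entry when a proxy fails.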

4.3. Random delays and request headers

import random
import time

import requests
from fake_useragent import UserAgent

ua = UserAgent()


def random_delay():
    # Sleep for a random 0.5 to 2.5 seconds between requests
    time.sleep(random.uniform(0.5, 2.5))


def get_with_random_headers(url):
    headers = {
        'User-Agent': ua.random,
        'Accept-Language': 'en-US,en;q=0.5',
        'Referer': 'https://www.google.com/'
    }
    random_delay()
    return requests.get(url, headers=headers, timeout=10)
